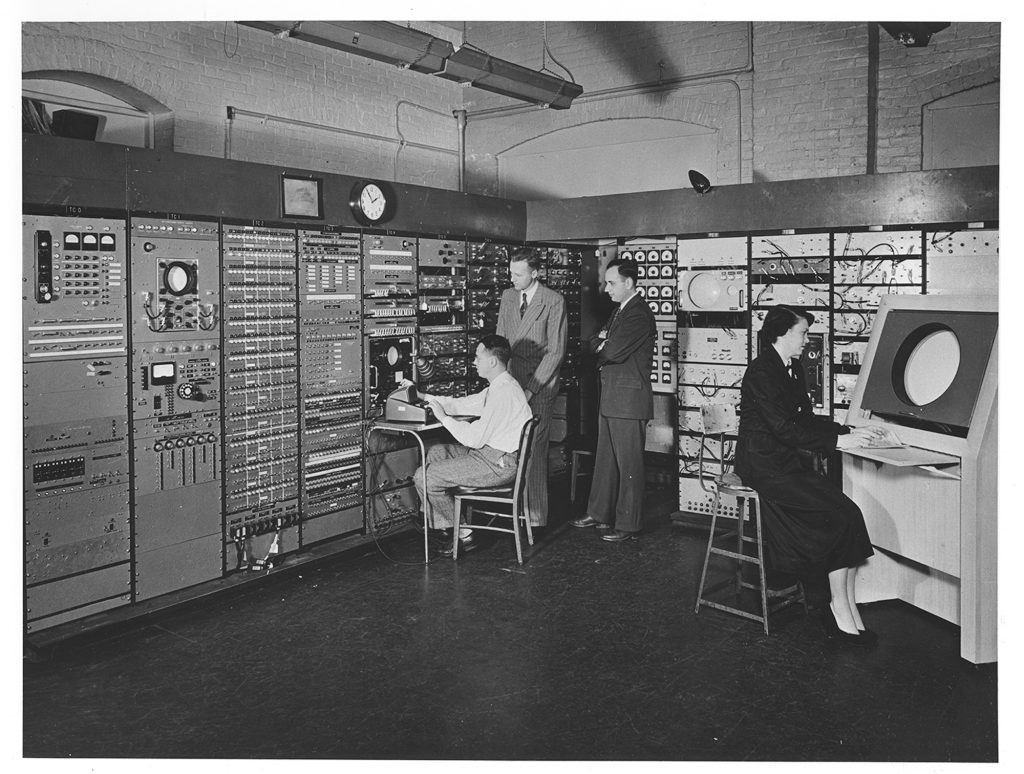

The 1952 Whirlwind, developed at MIT, was a real-time digital computer designed initially for flight simulation and later adapted for national air defense. Its ability to process data in real time was revolutionary, establishing a new benchmark for computing speed and efficiency. This experience with the Whirlwind ignited the world’s curiosity about, and respect for, the early machines and innovators in computing.

The setting is the Computer Museum in Boston. As a place dedicated to celebrating the evolution of computers, the museum houses rare, historical machines like the Whirlwind, which serve as a tangible reminder of the advancements made over decades. My work here is both personal and professional, as I seek to connect readers with the stories of the pioneers who laid the groundwork for the digital age.

My primary goal with this series is to guide readers through the history of computing, capturing the spirit of innovation that defined the early years. I hope to bring readers back to a time when computers were not the ubiquitous devices we know today but rather experimental machines, each unique and created for specific purposes. This series spans the 1930s to the 1950s, charting the field’s progress from conceptual ideas to practical, working machines. I want readers to see these early pioneers not only as inventors but as individuals motivated by curiosity, vision, and the desire to solve complex problems that previously seemed insurmountable.

This series is not just about machines; it is a narrative about the people behind them. These pioneers came from various disciplines—engineering, mathematics, physics, and logic. Their backgrounds influenced their approach to computing, resulting in diverse, creative solutions to the limitations of the time. By documenting their achievements and recounting their stories, I aim to offer a comprehensive view of what it was like to invent computing during a time when such ideas were entirely new.

The 1930s was a period marked by rapid industrialization, scientific breakthroughs, and, ultimately, a global conflict that underscored the need for advanced technology. Before computers, complex calculations for engineering, military, and scientific purposes were carried out by hand or with mechanical aids. For example, calculations for bomb trajectories, weather predictions, and atomic research often required months of labor by teams of human “computers”—a term that referred to individuals who performed mathematical computations. These manual methods were limited by time and human error, making accurate, large-scale calculations challenging and unreliable.

World War II further intensified the demand for faster, more efficient computational tools. Military projects like radar, cryptography, and ballistics calculation highlighted the need for machines that could process information rapidly and accurately. Amid this demand, a few visionary individuals began experimenting with machines that could automate these tasks, freeing scientists and engineers from the constraints of manual computation. This period was both a time of crisis and creativity; the pressing needs of the time created a fertile ground for innovation, pushing scientists and engineers to think beyond traditional methods.

The Vision and Drive of Early Pioneers

These early pioneers were not simply inventing machines; they were reshaping the way society thought about problem-solving and information processing. Figures like Alan Turing, John Atanasoff, Howard Aiken, George Stibitz, and Konrad Zuse came from different backgrounds and geographical locations, yet shared a common drive to overcome the limitations of existing technology. Each of them, working independently and often with limited resources, began to explore the possibility of building machines that could perform mathematical calculations autonomously.

For instance, Alan Turing, a British mathematician, proposed the concept of a “universal computing machine” in 1936, envisioning a device capable of performing any calculation given the right instructions. His work introduced foundational ideas about algorithms and computability that are still central to computer science today. In the United States, John Atanasoff and his assistant Clifford Berry worked on the Atanasoff-Berry Computer (ABC), designed specifically to solve linear equations. Atanasoff’s work laid the groundwork for binary computation, using electronic components to create a machine capable of handling mathematical operations far faster than human efforts. Howard Aiken at Harvard, inspired by Charles Babbage’s early 19th-century designs, developed the Harvard Mark I, a machine capable of handling complex mathematical functions and one of the first large-scale digital calculators.

A Global Effort with Local Roots

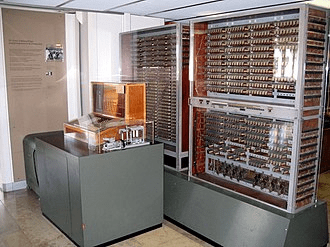

This innovation was happening simultaneously in different parts of the world. In Germany, Konrad Zuse worked in his Berlin apartment to construct the Z1, a mechanical computer that would eventually lead to the fully functional Z3, often described as the first working programmable computer. Meanwhile, at Bell Labs in the U.S., George Stibitz was experimenting with relay circuits and binary logic, constructing what would be one of the earliest examples of a relay-based computer.

Each pioneer was driven by unique motivations and challenges, from solving specific scientific problems to advancing military technology. This series underscores that computing’s early years were not the product of a single invention or individual but a cumulative effort from thinkers who, despite their differences, shared a vision of a future where machines could think and compute independently.

Importance of Preserving Computing’s Origins

Remembering and preserving these stories is a way to honor the work and dedication of early computer pioneers. Understanding the origins of computing provides essential context for today’s technological landscape. While modern computers are vastly more powerful and versatile, they are built on the principles these pioneers established. Concepts like binary arithmetic, logic gates, and programmable memory, ideas that originated in the work of early computer scientists, are still fundamental to computer design today.

In a world where computing technology is ubiquitous, it can be easy to take for granted the work that led to its creation. I want readers to see that computing is not merely a technical field; it is a story of human ingenuity, perseverance, and the desire to push beyond known boundaries.

This journey is as much about the machines as it is about the people behind them. By the end of this series, I hope readers will appreciate the beauty and ingenuity of early computing technology, recognizing that these pioneers operated with limited resources and in many cases had to invent not only the machines but also the mathematical and logical principles behind them. Through this series, I hope to preserve their legacy, ensuring that their contributions to the field of computing are celebrated and remembered as integral to the development of the modern digital world.
